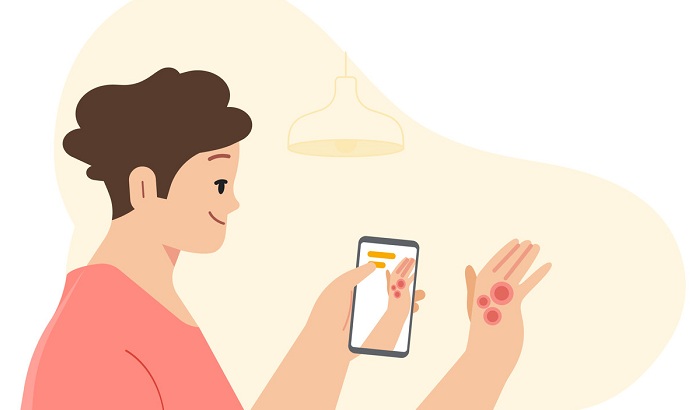

At Google’s annual I/O developer conference, the company previewed its AI-powered dermatology assist tool, a web-based application. Users upload three well-lit images of the skin, hair or nail concern, taken from different angles. The tool then asks a series of questions about the user’s skin type, how long they’ve had the issue and other symptoms that help it narrow down the possibilities.

The AI model analyzes this information and draws from its knowledge of 288 conditions to provide a list of possible matching conditions, according to Bui.

For each matching condition, the tool will show dermatologist-reviewed information and answers to commonly asked questions, along with similar matching images from the web. Users can either save their results, delete them or donate them to Google’s research efforts.

Users’ data are stored securely and encrypted in transit and at rest, Bui said. “Google doesn’t use the data to target ads,” she said.

The tool marks Google’s first consumer-facing medical device, but the company is not currently seeking approval from the Food and Drug Administration (FDA) for the technology, Bui said.

“This is currently not a clear-cut path for this technology” to receive FDA approval, she said.

The goal is to give users access to authoritative information so they can make a more informed decision about their next step, said Bui.

Bui, a physician, said many patients wait too long to see a doctor for a dermatologic condition. One of her patients noticed a mole on her toe but, because she felt fine, put off getting care; when she finally did, she first saw a doctor who did not have expertise in dermatology.

“This patient lived two hours from the nearest dermatologist. So she drove two hours, got a skin biopsy and the mole biopsy came back as melanoma,” she said. “Skin diseases are an enormous global burden, and every day millions of people turn to Google to research their skin conditions.”

Two billion people worldwide suffer from dermatologic issues, but there’s a global shortage of specialists. While many people’s first step involves going to a Google search bar, it can be difficult to describe what you’re seeing on your skin through words alone.

It took three years of machine learning research and product development to build the AI-powered dermatology assist tool, according to Yuan Liu, Ph.D., technical lead on the project.

To date, Google has published several peer-reviewed papers that validate the AI model, and more are in the works, according to a blog post.

In a study published in Nature Medicine last year, the AI system was shown to be as good as a dermatologist at identifying 26 skin conditions, and more accurate than the primary care physicians and nurses in the study.

A recent paper published in JAMA Network Open suggested that Google Health’s AI tool may help clinicians diagnose skin conditions more accurately in primary care practices, where most skin diseases are initially evaluated.

The new tool will launch later this year in markets outside the U.S. Recently, the AI model that powers the tool passed clinical validation, and the tool has been CE marked as a Class I medical device in the European Union.

Health disparities can make building machine learning models challenging because the training data can be biased, Liu said.

With this in mind, the Google Health team built the AI model to account for factors like age, sex, race and skin type, ranging from pale skin that does not tan to brown skin that rarely burns, according to the blog post.

“We developed and fine-tuned our model with de-identified data encompassing around 65,000 images and case data of diagnosed skin conditions, millions of curated skin concern images and thousands of examples of healthy skin—all across different demographics,” Liu and Bui wrote in the post.

The tech giant has been pushing further into using AI technology for health and wellness. Google and sister company Verily worked together to develop a machine learning-enabled screening tool for diabetic retinopathy, a leading cause of preventable blindness in adults.

More recently, Google moved into health tracking, using smartphone cameras to monitor heart and respiratory rates. Google Health added new features to its Google Fit app that enable users to take their pulse using just their smartphone’s camera.

The tech giant has also shown an interest in sleep health: its second-generation Nest Hub includes a sleep sensing feature that uses radar-based sleep tracking, along with an algorithm for cough and snore detection. The feature marked Google’s first foray into the health and wellness space with its smart display products.